How To ACTUALLY Build an AI UGC Content Machine

Learn how to build an AI UGC factory with Claude Cowork + Arcads, covering pipeline steps, model routing, feedback loops, and dual revenue streams.

Introduction: An Accidental Experiment

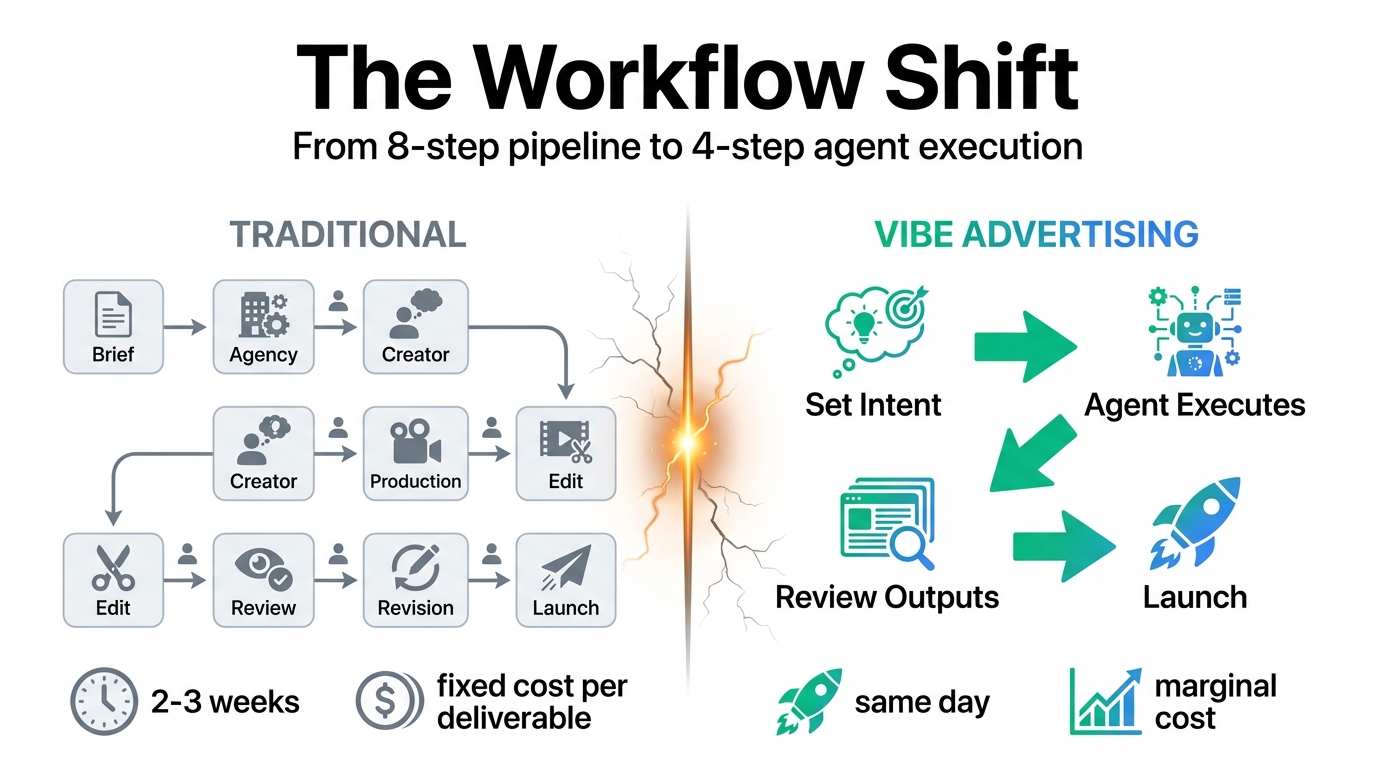

Someone set out to make one UGC creative — a single clip for a product. The normal pipeline: a brief, a creator, a shoot day, an edit, two rounds of feedback before approval.

By the end of the afternoon, they had 47 ad creatives.

They didn't write 47 scripts. They didn't brief 47 creators. They set an intent, described a product, pointed at a target customer, and let an agent handle everything downstream.

This isn't a marginal improvement. It's a fundamentally different operating model.

Most People Are Still Using AI to Type

Most teams treating AI as a creative accelerator are still stuck in a one-output-at-a-time loop:

Write a prompt → generate → review → tweak → regenerate

That's not an AI workflow. That's a human workflow where AI does the typing. You're still the bottleneck. Every micro-decision still needs your call.

The real unlock isn't faster production. It's removing yourself from the production loop entirely.

That's what vibe advertising means: you set the strategic intent at the top — product, audience, tone, conversion goal. Everything below — hooks, scripts, visual direction, model selection, rendering, variations, multilingual versions — runs without you touching it. You come back to finished outputs, not a half-built process that still needs your input at every step.

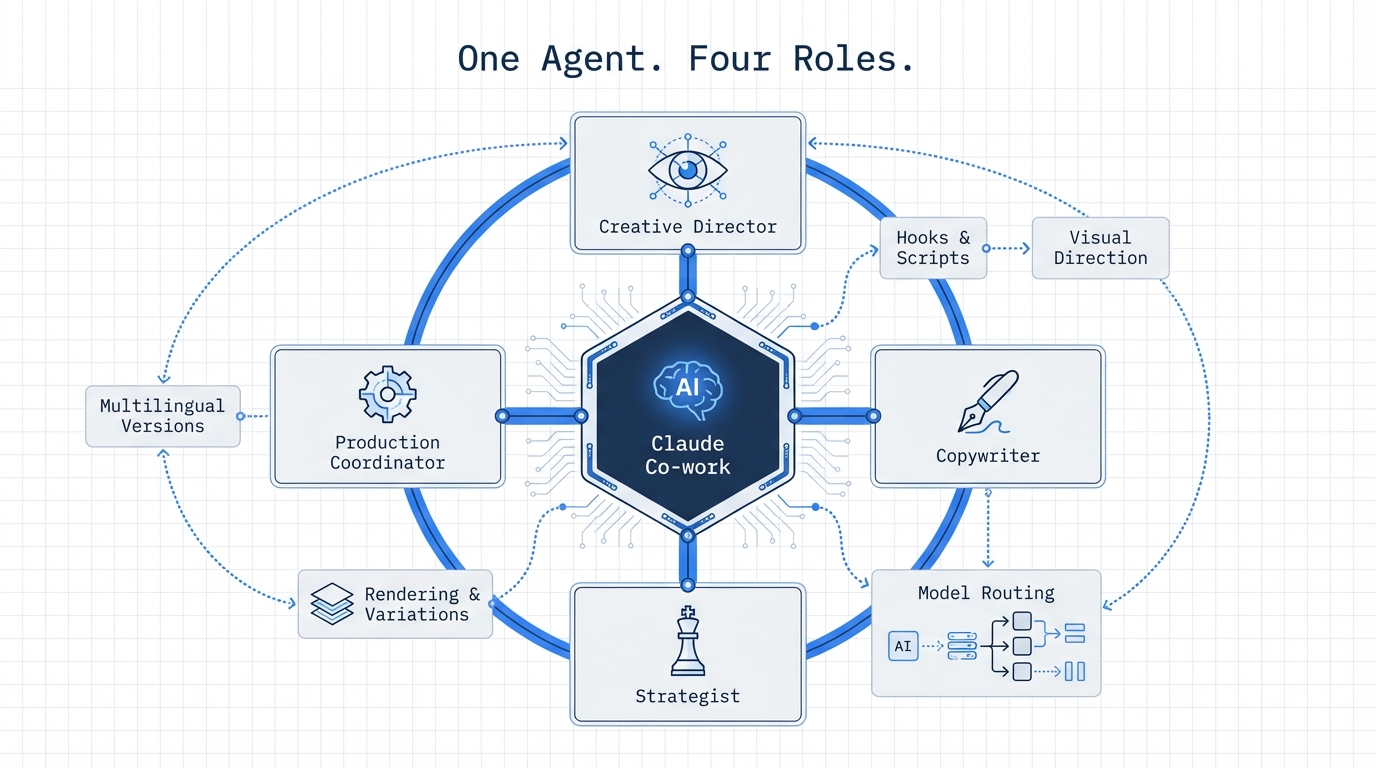

Claude Cowork: More Than a Chatbot

Claude Cowork is Anthropic's desktop tool built for non-developers. Not a browser extension. Not a chatbot you copy-paste into. An actual agent running on your machine, with access to your files, your APIs, and your tools, executing multi-step workflows the same way a developer would, except you describe the task in plain language and it figures out the implementation.

Most people using it right now are doing light tasks. Summarizing a document. Drafting a reply. Pulling information from a PDF.

What it's actually capable of — when connected to the right backend — is something entirely different.

In a vibe advertising setup, Claude Cowork becomes all of these simultaneously:

- Creative director

- Copywriter

- Strategist

- Production coordinator

It generates the creative strategy, writes every variation, makes routing decisions about which model handles which clip, and calls the Arcads API to execute the renders. You're not in that loop at all until the outputs land.

The Full Pipeline: 5 Steps

Step 1: The Input

Three things. One paragraph:

- Product description

- Target customer profile (written like you'd brief a real creator)

- Tone direction

Example:

"A skincare supplement targeting women 28 to 40. The customer has tried multiple products before and hasn't seen results. She's skeptical of marketing claims but responds to specificity and real outcomes. Tone should feel like a recommendation from someone who had the same skepticism and was genuinely surprised."

That's it. One paragraph. Everything the agent needs to build a full creative strategy.
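A minimal sketch of how that input could be captured before it goes to the agent. The class name `CreativeBrief` and its field names are illustrative assumptions, not part of Claude Cowork or Arcads; the only thing taken from the workflow above is that three inputs get joined into one paragraph.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CreativeBrief:
    """The three Step 1 inputs (names here are hypothetical)."""
    product: str
    customer: str
    tone: str

    def __post_init__(self) -> None:
        # Refuse an incomplete brief: the agent needs all three inputs.
        for name in ("product", "customer", "tone"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} must not be empty")

    def as_paragraph(self) -> str:
        # Join the three inputs into the single paragraph handed to the agent.
        return " ".join(p.strip() for p in (self.product, self.customer, self.tone))


brief = CreativeBrief(
    product="A skincare supplement targeting women 28 to 40.",
    customer=(
        "The customer has tried multiple products before and hasn't seen results. "
        "She's skeptical of marketing claims but responds to specificity and real outcomes."
    ),
    tone=(
        "Tone should feel like a recommendation from someone who had the same "
        "skepticism and was genuinely surprised."
    ),
)
print(brief.as_paragraph())
```

Keeping the three parts separate makes it easy to swap the tone or the customer profile later without rewriting the whole paragraph.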

Step 2: Hook Generation Across Awareness Levels

Claude generates 20 hook variations — not randomly, but structured across psychological entry points:

- Cold audience: Doesn't know the product exists; needs a problem surfaced first

- Problem aware: Knows they have the issue but hasn't found a solution

- Solution aware: Has tried alternatives and needs a reason to switch

- Product aware: Has seen the brand but hasn't converted

Each awareness level demands a completely different creative approach. Cold audiences need pattern interrupts and relatable problem framing. Product-aware audiences need differentiation and proof. Most teams write one version and run it to everyone. That's why their creative fatigues fast.
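One way to picture the structure: split the 20 hooks across the four awareness levels and build one request per level. The even split, the function names, and the request wording are assumptions made for illustration; the article only says the hooks are structured across these levels.

```python
# Awareness levels and descriptions as listed above; the dictionary keys are illustrative.
AWARENESS_LEVELS = {
    "cold": "Doesn't know the product exists; needs a problem surfaced first",
    "problem_aware": "Knows they have the issue but hasn't found a solution",
    "solution_aware": "Has tried alternatives and needs a reason to switch",
    "product_aware": "Has seen the brand but hasn't converted",
}


def plan_hooks(total: int = 20) -> dict[str, int]:
    """Split `total` hooks as evenly as possible across the levels (an assumption)."""
    base, extra = divmod(total, len(AWARENESS_LEVELS))
    return {
        level: base + (1 if i < extra else 0)
        for i, level in enumerate(AWARENESS_LEVELS)
    }


def hook_request(level: str, count: int, brief: str) -> str:
    """Build the plain-language request for one awareness level."""
    return (
        f"Write {count} hooks for this audience stage: {AWARENESS_LEVELS[level]}.\n"
        f"Brief: {brief}"
    )


for level, count in plan_hooks().items():
    print(hook_request(level, count, "A skincare supplement targeting women 28 to 40."))
```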

Step 3: Full Script Structuring with Visual Direction

Each hook becomes a full script — not just dialogue, but:

- Pacing notes

- Visual direction

- B-roll suggestions

- Tone coaching for delivery

- Where the pause should land before the result is revealed

The agent writes these scripts the way a good creative director briefs a creator: specific enough that whoever or whatever delivers it knows exactly what the clip should feel like.
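As a rough sketch, a full script can be treated as a record with one slot per element listed above, plus a check for anything left empty. The `ScriptBrief` class and its field names are hypothetical, and the sample hook and dialogue are placeholders, not output from the pipeline.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ScriptBrief:
    """One hook expanded into a full script (field names are illustrative)."""
    hook: str
    dialogue: str
    pacing_notes: str = ""
    visual_direction: str = ""
    b_roll: list[str] = field(default_factory=list)
    tone_coaching: str = ""
    pause_before_reveal: str = ""

    def missing_parts(self) -> list[str]:
        # Names of any parts still empty, so an incomplete script can be
        # sent back for another pass before it is rendered.
        return [f.name for f in fields(self) if not getattr(self, f.name)]


draft = ScriptBrief(hook="placeholder hook", dialogue="placeholder dialogue")
print(draft.missing_parts())
```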

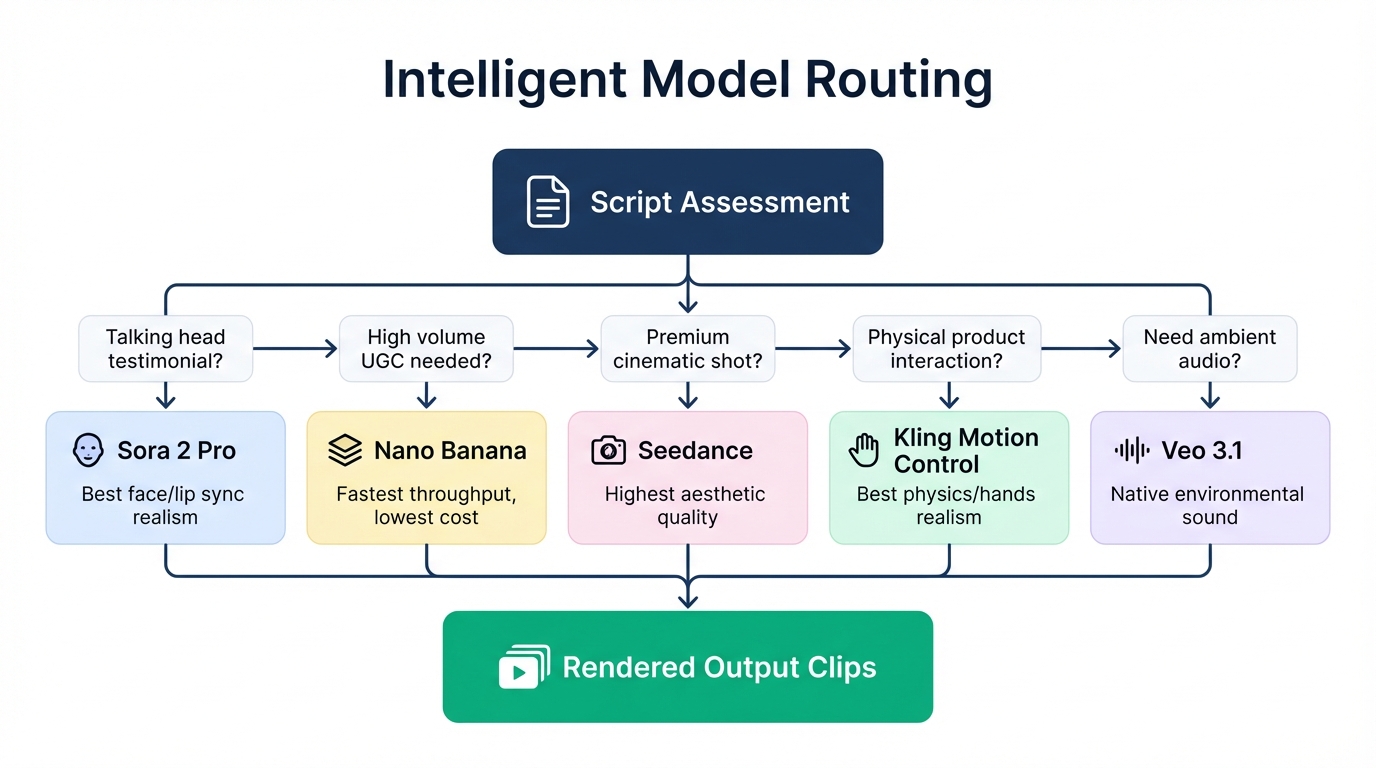

Step 4: Intelligent Model Routing via Arcads

This is where the vibe advertising loop closes.

Claude calls the Arcads API and routes each script to the right model based on what the clip needs:

| Model | Strength | Best For |
|-------|----------|----------|
| Sora 2 Pro | Most realistic talking-head | Face, lip sync, skin texture, natural movement at full screen |
| Nano Banana | High throughput | 20+ UGC-style clips fast; quantity and variety over cinematic quality |
| Seedance | Premium aesthetics | Product shots, aspirational lifestyle visuals; can't afford lo-fi |
| Kling Motion Control | Physical realism | Hands, textures, product interaction, real-world physics |
| Veo 3.1 | Native ambient audio | Environmental sound baked into the clip, no separate VO needed |

The routing logic isn't manual. The agent assesses each script, identifies the shot requirements, and matches it to the model with the strongest output for that specific use case.
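In the real pipeline the agent makes this call by reading the script. As a simplified stand-in, the same decision can be sketched as a priority lookup from shot requirements to the models in the table above. The requirement tags, their priority order, and the fallback model are all assumptions for illustration, and nothing here calls the Arcads API.

```python
# (requirement tag, model) pairs checked in order; tags and ordering are hypothetical.
ROUTING_RULES = [
    ("talking_head", "Sora 2 Pro"),                  # face, lip sync, skin texture
    ("product_interaction", "Kling Motion Control"), # hands, textures, physics
    ("ambient_audio", "Veo 3.1"),                    # environmental sound in the clip
    ("premium_visuals", "Seedance"),                 # product shots, lifestyle visuals
    ("bulk_variations", "Nano Banana"),              # many UGC-style clips fast
]


def route(requirements: set[str], default: str = "Nano Banana") -> str:
    """Return the first model whose tag appears in the script's requirements."""
    for tag, model in ROUTING_RULES:
        if tag in requirements:
            return model
    # No specific requirement matched: fall back to the high-throughput model.
    return default


print(route({"talking_head", "ambient_audio"}))
print(route({"premium_visuals"}))
```

A fixed priority list is the crudest possible version of this; its main value is making the routing decision inspectable when a clip comes back from the wrong model.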

Step 5: Multilingual Without Re-shooting

After the first batch renders, the same scripts are re-run in Spanish, Portuguese, French, and German.

Same reference image. Same visual direction. Different language prompt with accent direction baked in:

"Deliver this in Brazilian Portuguese. Warm and conversational, slight regional inflection, natural pace, not broadcaster formal."

Sora 2 Pro handles accent and tonality from the prompt. You're not just translating audio — you're generating a native-sounding delivery in each market from the same character and the same clip structure.
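The language step amounts to keeping the script and reference image fixed while swapping one line of delivery direction. A minimal sketch, assuming the same delivery note is reused for every language (the article only shows the Brazilian Portuguese one) and using a hypothetical helper name:

```python
# Languages named in the article; "Brazilian Portuguese" follows its example prompt.
LANGUAGES = ["Spanish", "Brazilian Portuguese", "French", "German"]

# Delivery direction quoted from the example above, reused for all languages
# here as an assumption.
DEFAULT_DELIVERY = (
    "Warm and conversational, slight regional inflection, "
    "natural pace, not broadcaster formal."
)


def language_prompts(
    languages: list[str] = LANGUAGES, delivery: str = DEFAULT_DELIVERY
) -> dict[str, str]:
    """Build one delivery prompt per language for the same script."""
    return {lang: f"Deliver this in {lang}. {delivery}" for lang in languages}


for lang, prompt in language_prompts().items():
    print(f"{lang}: {prompt}")
```

In practice each market would likely get its own delivery note rather than a shared default.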

Total output from one afternoon: 47 pieces of ad creative across 4 languages, 3 model types, and 4 awareness levels.

Why Arcads Is the Right Backend

This pipeline works because Arcads puts every major generation model behind a single API.

Veo 3.1. Kling Motion Control. Nano Banana. Sora 2 Pro. Seedance. One platform. One integration. One place to manage your reference images and character assets.

Without Arcads, the workflow falls apart immediately. You're managing separate accounts for each model, copying prompts between interfaces, re-uploading the same reference image five times across five platforms, and losing character consistency every time you switch tools. The friction isn't just annoying; it breaks the automation. An agent can't run a vibe advertising pipeline if it has to log into five different dashboards.

With Arcads, Claude works through one API integration and gets access to the full model stack. The agent decides which model fits each clip, passes the script and reference assets, and gets rendered output back. No tab switching. No manual uploads. No consistency loss.

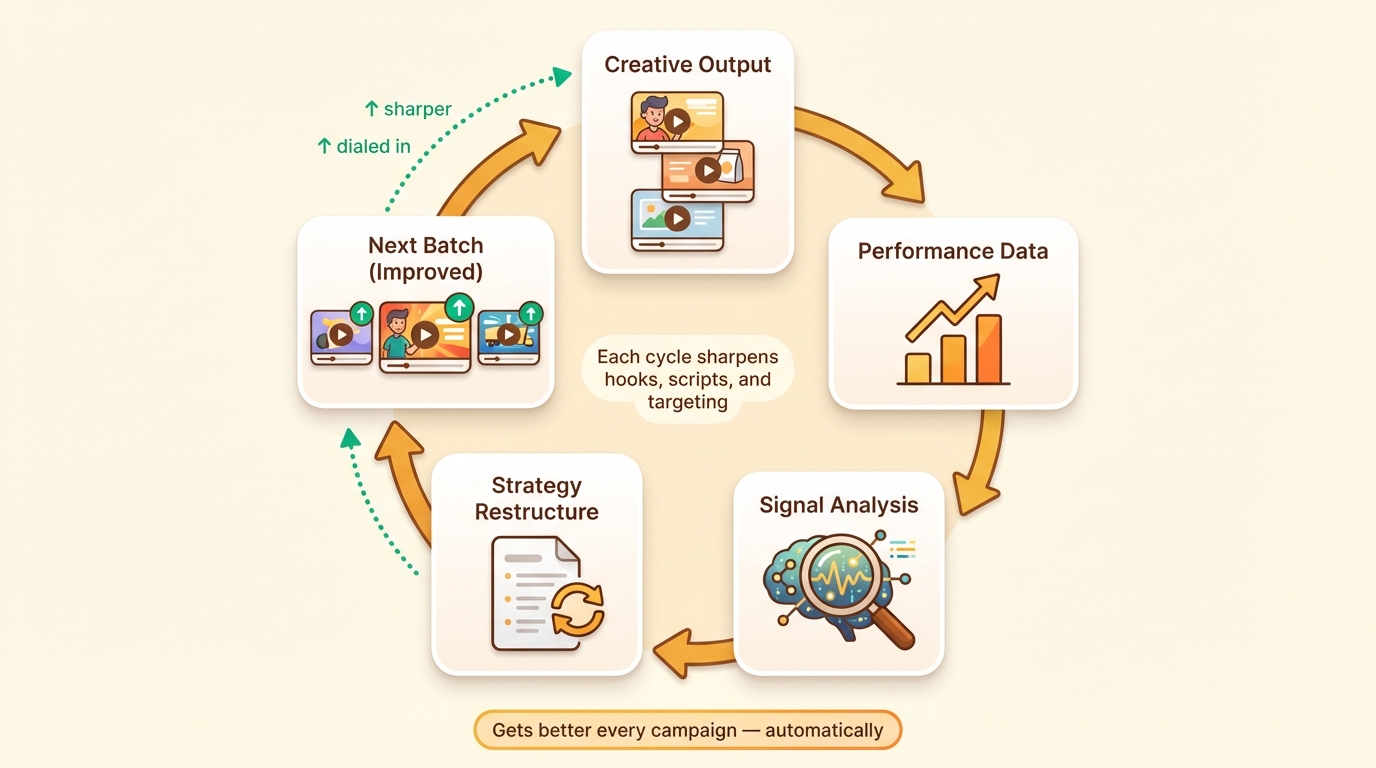

The Self-Improving Loop

The part of this that gets more valuable over time isn't the volume. It's the feedback structure.

After the first batch of clips posts and accumulates performance data, you feed the signals back into Claude:

- Which hooks got watched past three seconds

- Which scripts drove clicks

- Which awareness levels converted for this specific audience

- Which visual styles held attention

Claude restructures the next batch around what worked. Stronger hooks front-loaded. Underperforming awareness levels deprioritized or rewritten. Visual direction adjusted based on response.

Over multiple campaigns, the agent builds a working model of what converts for that product and customer. Creative output gets tighter each time. Hooks get sharper. Scripts get more dialed in.
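To make the feedback step concrete, here is a small sketch that turns raw performance numbers into the signals listed above: three-second hold rate and click rate per hook, plus click rate per awareness level. The `ClipStats` fields and the sample figures are made up for illustration; real data would come from whatever ad platform export you use.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ClipStats:
    hook_id: str
    awareness_level: str
    impressions: int
    views_3s: int  # viewers who watched past three seconds
    clicks: int


def hold_rate(s: ClipStats) -> float:
    return s.views_3s / s.impressions if s.impressions else 0.0


def click_rate(s: ClipStats) -> float:
    return s.clicks / s.impressions if s.impressions else 0.0


def rank_hooks(stats: list[ClipStats]) -> list[ClipStats]:
    # Best first: click rate, with hold rate as the tiebreaker.
    return sorted(stats, key=lambda s: (click_rate(s), hold_rate(s)), reverse=True)


def level_click_rate(stats: list[ClipStats]) -> dict[str, float]:
    # Pool impressions and clicks per awareness level before dividing.
    totals = defaultdict(lambda: [0, 0])
    for s in stats:
        totals[s.awareness_level][0] += s.clicks
        totals[s.awareness_level][1] += s.impressions
    return {lvl: (c / i if i else 0.0) for lvl, (c, i) in totals.items()}


# Made-up numbers, only to show the shape of the data.
sample = [
    ClipStats("h1", "cold", 1000, 400, 10),
    ClipStats("h2", "problem_aware", 1000, 600, 30),
    ClipStats("h3", "cold", 500, 100, 20),
]
print([s.hook_id for s in rank_hooks(sample)])
print(level_click_rate(sample))
```

The ranked list and the per-level summary are what you would paste back into Claude along with the instruction to rebuild the next batch around the winners.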

Most agencies charge a monthly retainer to do this kind of creative analysis and iteration manually. In this setup, it's part of the loop. You're not paying for strategic overhead. It runs automatically.

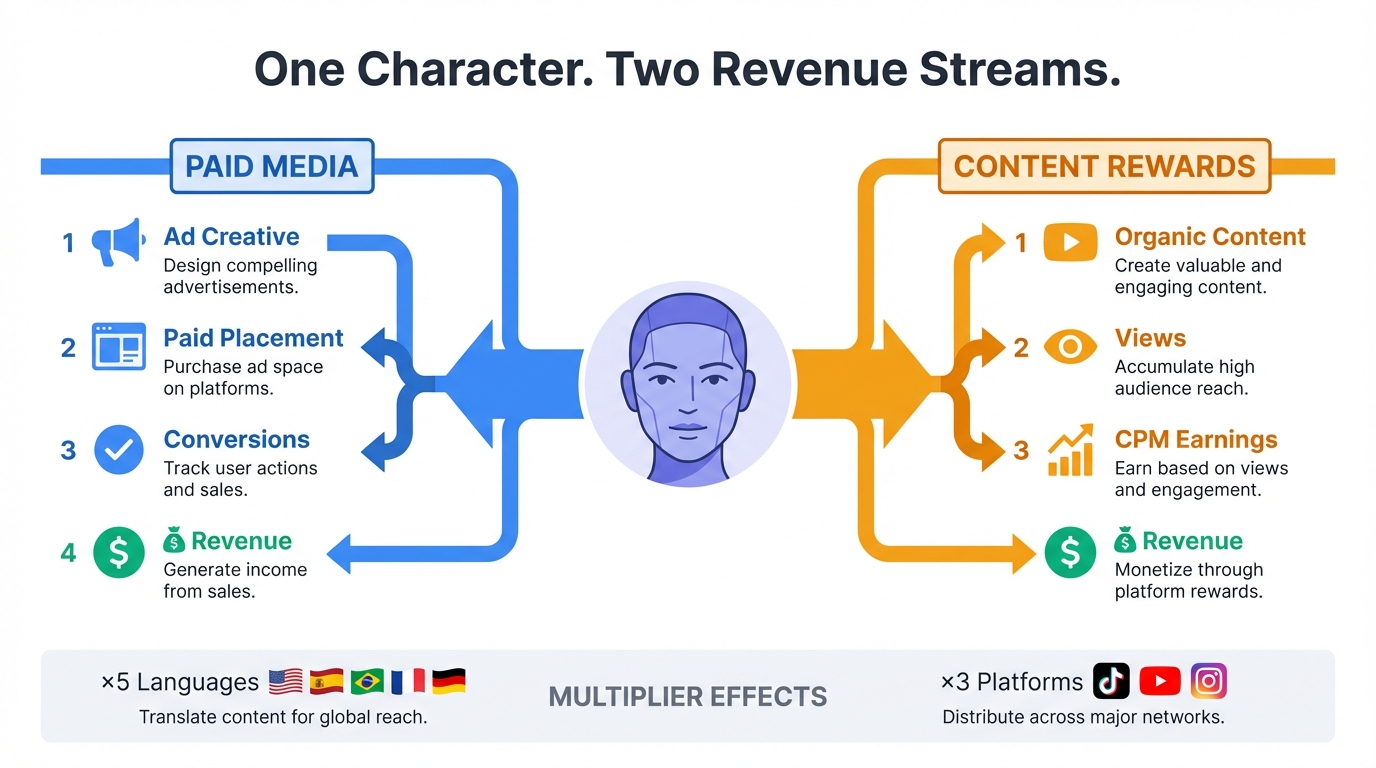

Content Rewards: A Second Revenue Stream

Here's where the pipeline gets interesting for operators who want more than one way to monetize the same infrastructure.

Content Rewards is not an ad platform. It's a pay-per-view system for organic content. Brands run campaigns on the platform; you post content that fits the brief in a way that feels native to the feed; it gets views; you get paid per thousand. No affiliate links. No sponsored post disclosures. No minimum follower count.

The distinction matters because Content Rewards requires a completely different creative approach than ad production:

- Ad creative is designed to convert. Tight hook, clear offer, direct CTA. Built for paid placements where you're paying for every impression and need a return.

- Content Rewards content is designed to feel like it belongs in the feed. Educational, opinion-based, review-style, first-person narrative. The kind of content a real person would post because they genuinely wanted to share something. If it reads like an ad, it won't get views. No views, no pay.

Same character. Same pipeline. Completely different scripts — written for discovery and retention, not conversion.

The volume advantage still applies. Your AI influencer can post 20–30 pieces of organic-style content per week across TikTok, YouTube Shorts, and Reels simultaneously. Find active campaigns on Content Rewards, match the brief with content that actually feels native, and let the view count accumulate across platforms.

The multilingual angle compounds this further. One content brief, five language versions, five times the eligible audience. Brands running global campaigns on Content Rewards are looking for exactly this, because sourcing creators who can produce natural-feeling content in multiple languages is genuinely hard. The Arcads + Sora 2 Pro workflow solves it in an afternoon.

Two revenue streams. One AI influencer. One pipeline.

Ad creative feeds paid media. Organic content feeds Content Rewards. The character runs both simultaneously, and neither one cannibalizes the other.

The Actual Shift

A year ago, AI UGC meant obvious CGI faces, wrong hand movements, audio that didn't match the lips.

Six months ago, it meant decent clips that fell apart at 1080p or the moment someone moved their hands.

Right now, with Claude Cowork running the strategy, Arcads routing to the right model, and Sora 2 Pro animating a locked reference image, you're producing content that most viewers cannot identify as AI on a first watch.

Add the self-improving feedback loop and you have a system that gets sharper every campaign it runs.

Add Content Rewards on the organic side and you have two monetization channels running off one character and one pipeline.

The tools are ready. The monetization infrastructure is ready. The only variable is whether you build it now or watch someone else corner the niche while you're still briefing individual shoots.

Get into Arcads. Connect Claude Cowork. Run the pipeline once. Look at what comes back.

That's the only argument left.
