Lessons from Building Claude Code Skills: What Actually Works

A practical breakdown of the skill patterns, best practices, and team lessons Anthropic shared from building and using Claude Code skills at scale.

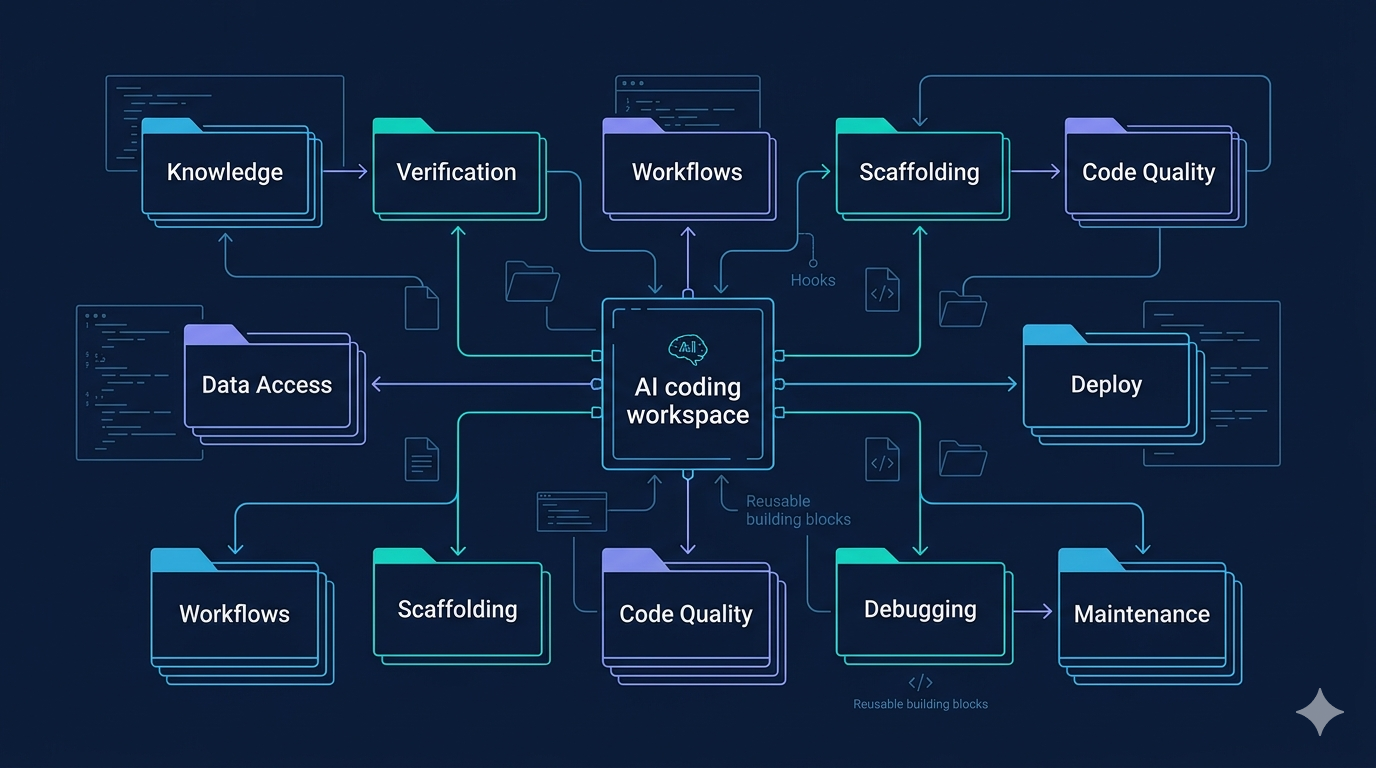

Claude Code skills are often dismissed as “just markdown files,” but that framing misses the point. The real power is that a skill is a folder: it can include scripts, references, assets, configuration, and even scoped hooks that only activate when the skill is invoked. That turns skills from static instructions into reusable execution environments.

A recent post by Thariq distilled what Anthropic has learned from using hundreds of skills internally. The biggest takeaway is simple: the best skills are not generic. They are opinionated in the right places, modular in structure, and grounded in real failure modes rather than theory.

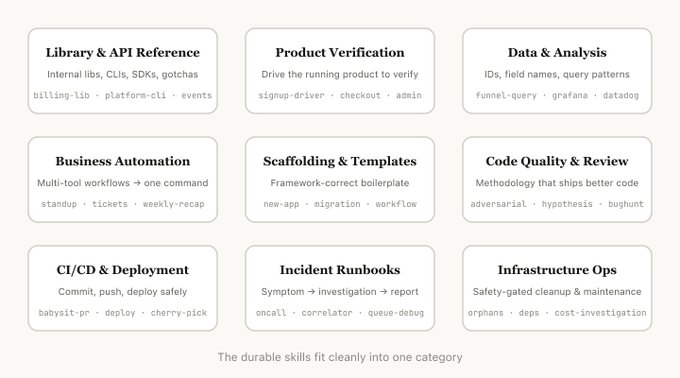

The 9 skill patterns worth understanding

The post groups successful skills into recurring categories. That framing is useful because it stops teams from building vague “do everything” skills that confuse the model.

1. Knowledge skills

These teach the agent how to use a library, internal CLI, SDK, or design system correctly. They work best when they include reference snippets and gotchas instead of repeating public docs.

2. Verification skills

These are some of the highest-leverage skills in any org. They tell the agent how to test whether its work actually functions, often using tools like Playwright, tmux, or assertion scripts.
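As a minimal sketch of the "assertion script" idea, a verification skill could ship a small checker that the agent runs after it changes something. The `run_checks` helper and the check names below are hypothetical and not taken from the original post; real checks would call your test runner or a browser driver instead of these stand-ins.

```python
from typing import Callable, Dict, List


def run_checks(checks: Dict[str, Callable[[], bool]]) -> List[str]:
    """Run each named check and return a description of every failure.

    A check counts as failed if it returns a falsy value or raises.
    """
    failures = []
    for name, check in checks.items():
        try:
            ok = check()
        except Exception as exc:
            # Report the crash as a failure instead of aborting the whole run.
            failures.append(f"{name}: raised {type(exc).__name__}")
            continue
        if not ok:
            failures.append(f"{name}: returned falsy")
    return failures


# Hypothetical stand-in checks; replace with real probes of the agent's work.
example_checks = {
    "port_parses": lambda: int("8080") == 8080,
    "page_has_title": lambda: "<title>" in "<html><title>ok</title></html>",
}
failed = run_checks(example_checks)
print("all checks passed" if not failed else "\n".join(failed))
```

Returning a list of named failures, rather than stopping at the first one, gives the agent the full picture in a single run.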

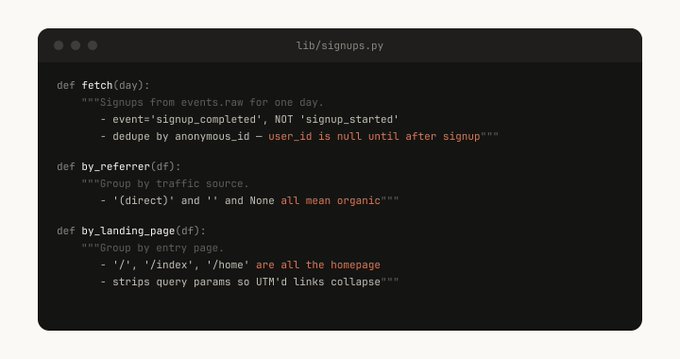

3. Data and monitoring skills

These connect the agent to dashboards, data sources, canonical tables, and query workflows so it can investigate with real context instead of guessing.

4. Workflow automation skills

These compress repetitive multi-step work into one reusable pattern, like standups, recap generation, or ticket creation.

5. Scaffolding skills

These generate framework-specific boilerplate that still needs natural-language judgment, such as app templates, handlers, migrations, or workflows.

6. Code quality skills

These enforce internal testing standards, review styles, or code conventions. They are especially strong when backed by deterministic scripts.

7. Deploy and release skills

These handle PR babysitting, deploy sequences, rollout checks, and rollback logic. In practice, these are where reusable operational guardrails shine.

8. Investigation skills

These start from symptoms — alerts, request IDs, error signatures — and walk through a repeatable debugging flow to produce a structured finding.
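One way to make the "structured finding" concrete is to give the skill a fixed output shape. The sketch below is an assumption about what such a shape could look like; the `Finding` class and all of its field names are hypothetical, not something the original post specifies.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class Finding:
    """Hypothetical report shape an investigation skill could ask the agent to fill in."""

    symptom: str                                         # the alert, request ID, or error signature
    evidence: List[str] = field(default_factory=list)    # queries run, log lines seen
    suspected_cause: str = "unknown"
    confidence: str = "low"                              # low, medium, or high
    next_steps: List[str] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the finding so it can be pasted into a ticket or a log."""
        return json.dumps(asdict(self), indent=2)


finding = Finding(
    symptom="HTTP 500 spike on the checkout endpoint",
    evidence=["error rate rose after the latest deploy"],
    next_steps=["compare error signatures before and after the deploy"],
)
print(finding.to_json())
```

Defaulting `suspected_cause` to "unknown" and `confidence` to "low" nudges the agent to state uncertainty explicitly rather than guess.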

9. Maintenance skills

These cover routine operational chores, especially the risky ones: cleanup, dependency handling, or cost investigations. Good maintenance skills make destructive actions safer by adding explicit guardrails.
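A common guardrail pattern for destructive chores is "dry run by default, explicit confirmation to delete, hard cap on blast radius." The sketch below illustrates that pattern under stated assumptions; the function names, the `confirm` flag, and the `max_delete` limit are hypothetical and not drawn from the original post.

```python
import time
from pathlib import Path
from typing import List, Optional


def find_stale_files(root: Path, max_age_days: float,
                     now: Optional[float] = None) -> List[Path]:
    """Return files under root whose modification time is older than max_age_days."""
    now = time.time() if now is None else now
    cutoff = now - max_age_days * 86400
    return sorted(p for p in root.rglob("*")
                  if p.is_file() and p.stat().st_mtime < cutoff)


def cleanup(root: Path, max_age_days: float, confirm: bool = False,
            max_delete: int = 50, now: Optional[float] = None) -> List[Path]:
    """Delete stale files only when confirm=True; otherwise just report them.

    Refuses to proceed if more than max_delete files would be affected.
    """
    stale = find_stale_files(root, max_age_days, now)
    if len(stale) > max_delete:
        raise RuntimeError(
            f"{len(stale)} files match, above the limit of {max_delete}; refusing"
        )
    if not confirm:
        return stale  # dry run: nothing is deleted
    for path in stale:
        path.unlink()
    return stale
```

Calling `cleanup(root, 7)` only lists candidates; the agent has to pass `confirm=True` deliberately before anything is removed.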

What makes a skill actually good

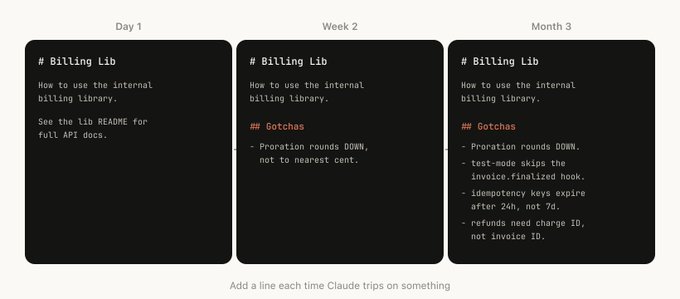

The strongest advice in the piece is that skills should push the model out of its default habits. Claude already knows a lot about coding. A useful skill adds what the model does not know by default:

- your internal edge cases

- your footguns

- your preferred workflows

- your real-world testing patterns

- your operational boundaries

That is why the gotchas section matters so much. If a skill does not encode repeated failure points, it is probably too generic to be valuable.

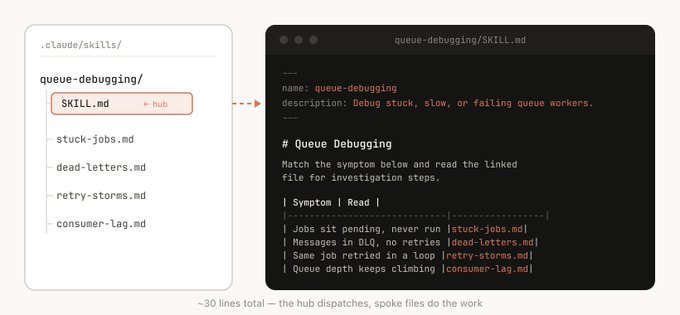

A skill is a folder, not a prompt

This is the part many teams still underuse. Skills can include:

- markdown references

- templates in assets/

- scripts and helper libraries

- examples

- config files for setup

- logs or stable data for lightweight memory

- scoped hooks that only run when the skill is invoked

That structure creates progressive disclosure. Instead of stuffing everything into one long instruction file, the agent can discover the right artifact at the right time.
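To make the folder idea tangible, here is a small sketch that scaffolds one possible layout. `SKILL.md` is the entry file Claude Code skills use and `assets/` is named above; the skill name `deploy-check`, the `references/` and `scripts/` directory names, and every file's contents are assumptions for illustration.

```python
from pathlib import Path
from typing import Dict, List

# Hypothetical layout: a short entry file that points at deeper artifacts.
LAYOUT: Dict[str, str] = {
    "SKILL.md": (
        "---\n"
        "name: deploy-check\n"
        "description: Use when the user asks to verify a deploy or rollout.\n"
        "---\n\n"
        "Read references/gotchas.md before acting. Run scripts/verify.py to check results.\n"
    ),
    "references/gotchas.md": "# Gotchas\n\n- (record repeated failure points here)\n",
    "scripts/verify.py": "print('replace with a real check')\n",
    "assets/report-template.md": "# Report\n\n## Summary\n",
}


def scaffold_skill(root: Path, layout: Dict[str, str] = LAYOUT) -> List[Path]:
    """Create the skill folder and return the files that were newly written.

    Existing files are left untouched so a rerun never overwrites edits.
    """
    created = []
    for relative, content in layout.items():
        path = root / relative
        path.parent.mkdir(parents=True, exist_ok=True)
        if not path.exists():
            path.write_text(content, encoding="utf-8")
            created.append(path)
    return created
```

The entry file stays short and only names the other files, so the agent opens `references/gotchas.md` or the template when the task calls for it.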

Writing skills that trigger well

One subtle but important point: the description field is not a summary. It is a trigger definition. That means a good description is explicit about when the skill should be called, not just what the skill is about.
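The difference is easier to see side by side. The sketch below is a rough heuristic, not an official check: the two example descriptions, the `looks_like_trigger` function, and the phrase patterns it searches for are all assumptions made for illustration.

```python
import re

# Hypothetical phrasings that suggest a description says *when* to use the skill.
TRIGGER_PATTERNS = [
    r"\buse (this )?(skill )?(when|for|if)\b",
    r"\bwhen (the user|asked|you)\b",
    r"\btrigger(s|ed)? (on|when)\b",
]


def looks_like_trigger(description: str) -> bool:
    """Return True if the description contains an explicit 'when to call' phrase."""
    text = description.lower()
    return any(re.search(pattern, text) for pattern in TRIGGER_PATTERNS)


summary_style = "Helpers for our deploy pipeline."
trigger_style = (
    "Use when the user asks to deploy, roll back, or check a rollout "
    "of the payments service."
)

for description in (summary_style, trigger_style):
    label = "trigger" if looks_like_trigger(description) else "summary only"
    print(f"{label}: {description}")
```

A check like this could run in review to flag descriptions that only summarize, though a human should still judge whether the stated conditions are the right ones.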

Code and hooks make skills much stronger

Another practical point from the post: one of the most powerful things you can give an agent is code. Scripts, libraries, and scoped hooks let the model spend time composing solutions instead of rewriting boilerplate every time.

The marketplace lesson

Not every skill should immediately become a shared marketplace asset. A healthier pattern is to let useful skills gain traction first, then promote them. Translation: your org probably does not need more skills. It needs fewer bad ones.

Related guide

For the practical playbook version of this post, see also: How to Design Better Agent Skills.

Source

Original post: https://x.com/trq212/status/2033949937936085378
